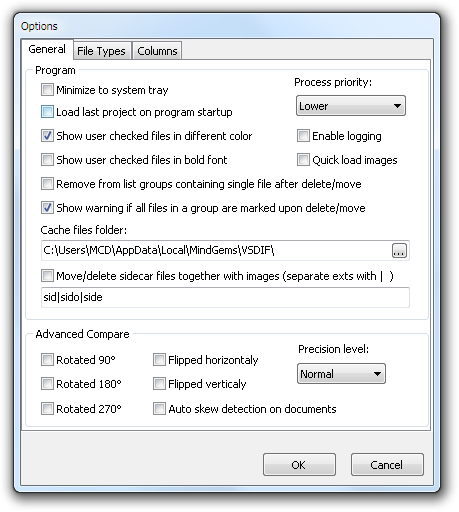

Deep learning (DL) has been applied with success in proofs of concept across biomedical imaging modalities and medical specialties [1–17].

DL models can classify images by disease or structure and can segment, track, and measure structures within images. DL thus has great promise for helping meet the overwhelming need for accurate, reliable, and scalable image interpretation that currently exists due to a near-universal shortage of trained experts [5, 6, 18–21]. However, DL requires labeled data, and labeling and annotation require those same experts. Labeling and annotation may even require agreement from multiple experts before a gold-standard label is assigned [3]. Even when semi-supervised or unsupervised methods are used to train a DL model, or when weak labels are used, experts can still be needed to label images in test datasets in order to benchmark performance on high-stakes medical tasks. As a result, labeling can be a costly and time-consuming bottleneck for DL in medical imaging. This is in contrast to labeling for DL in non-medical fields, which usually focuses on everyday objects and therefore can be performed more quickly and inexpensively by laypeople via crowdsourcing [22]. It has long been recognized that prioritizing the training data that most benefit model performance (instance selection), as opposed to choosing data at random, should reduce the labeling burden for DL [23, 24].

The challenge is determining which training data to prioritize. Another well-established form of data selection is active learning [25, 26]. A model (e.g. an image classifier) is trained on a labeled subset of data from a larger unlabeled pool. The remaining unlabeled data are evaluated according to the model, and some selection criterion is applied. The best-performing instance is then added to the training set, a new model is trained on the now-larger training set, and the cycle is repeated. In image classification, many selection criteria have been evaluated, including measures of the uncertainty of the model’s classification of a candidate image, the image’s contribution to the training set’s entropy, and the image’s representativeness of the pool [27, 28]. These investigations have been fruitful but have not identified a universal best performer across datasets and modeling approaches. Iterative model retraining can make active learning computationally intensive for DL, a drawback somewhat alleviated by selecting images in batches instead of individually (at the cost of making learning less “active”) [29]. In contrast, instance selection involves choosing images based only on their relationships to the rest of the images in the pool and growing the training set, avoiding the computational cost of iterative retraining. Image-selection criteria generally balance some measure of the informativeness of a candidate image with some measure of how much that image will add to the diversity of the resulting training set [23]. Instance selection has been shown to reduce training-set sizes in many settings, but again, which criteria perform best seems to depend on the type of model and dataset. Also, the majority of work on instance selection involves non-imaging datasets and precedes recent developments in DL. Medical images differ from images of everyday objects in ways that we hypothesized could be leveraged for a new instance selection approach.
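The active learning cycle described above (train on a labeled subset, score the remaining pool, add the top-scoring instance, retrain, repeat) can be sketched in a few lines of Python. This is only an illustrative sketch, not the method evaluated in this work: the nearest-centroid "model", the margin-based uncertainty criterion, the `oracle` labeling function, and the toy one-dimensional pool are all assumptions chosen to keep the example self-contained.

```python
import math


def fit_centroids(points, labels):
    """Toy stand-in for a classifier: the mean feature vector of each class."""
    sums, counts = {}, {}
    for p, y in zip(points, labels):
        s = sums.setdefault(y, [0.0] * len(p))
        for i, v in enumerate(p):
            s[i] += v
        counts[y] = counts.get(y, 0) + 1
    return {y: [v / counts[y] for v in s] for y, s in sums.items()}


def uncertainty(model, p):
    """Assumed selection criterion: a small gap between the distances to the
    two nearest class centroids means the model is unsure about p."""
    d = sorted(math.dist(p, c) for c in model.values())
    if len(d) < 2:
        return 0.0  # only one class seen so far; every candidate ties
    return -(d[1] - d[0])


def active_learning(pool, oracle, seed_idx, budget):
    """Grow a labeled set one instance at a time, retraining each round."""
    labeled = {i: oracle(pool[i]) for i in seed_idx}  # initial labeled subset
    for _ in range(budget):
        idx = list(labeled)
        model = fit_centroids([pool[i] for i in idx], [labeled[i] for i in idx])
        candidates = [i for i in range(len(pool)) if i not in labeled]
        if not candidates:
            break
        # Score every unlabeled instance and request a label for the top one.
        best = max(candidates, key=lambda i: uncertainty(model, pool[i]))
        labeled[best] = oracle(pool[best])
    return labeled


# Hypothetical pool: 41 points on a line, with a class boundary at x = 0.35.
pool = [(x / 10.0, 0.0) for x in range(-20, 21)]
oracle = lambda p: int(p[0] > 0.35)  # stands in for an expert labeler
labeled = active_learning(pool, oracle, seed_idx=[0, 40], budget=5)
print(sorted(pool[i][0] for i in labeled))
```

Each pass through the loop retrains the model, which is the cost the text attributes to active learning. Selecting several top-scoring candidates per round instead of one would give the batch variant, with fewer retraining steps.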
